2024-05-20 22:45:02

How to make your own deep learning accelerator chip! | by Manu Suryavansh | Towards Data Science

Intel's DLA: Neural Network Inference Accelerator [200]. | Download Scientific Diagram

Designing With ASICs for Machine Learning in Embedded Systems | NWES Blog

Intel Speeds AI Development, Deployment and Performance with New Class of AI Hardware from Cloud to Edge | Business Wire

The New Deep Learning Memory Architectures You Should Know About | eSilicon Technical Article | ChipEstimate.com

How to develop high-performance deep neural network object detection/recognition applications for FPGA-based edge devices - Blog - Company - Aldec

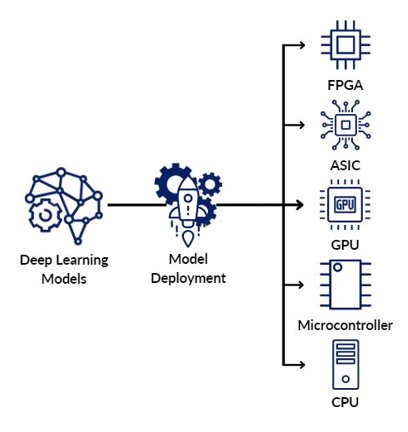

Hardware for Deep Learning Inference: How to Choose the Best One for Your Scenario - Deci

Will ASIC Chips Become The Next Big Thing In AI? - Moor Insights & Strategy

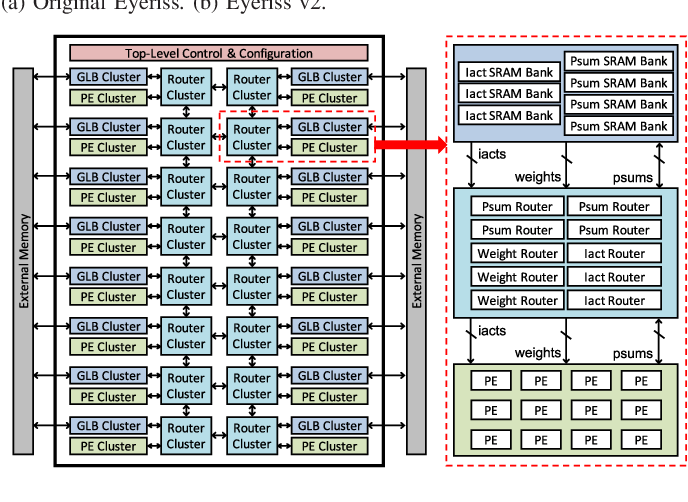

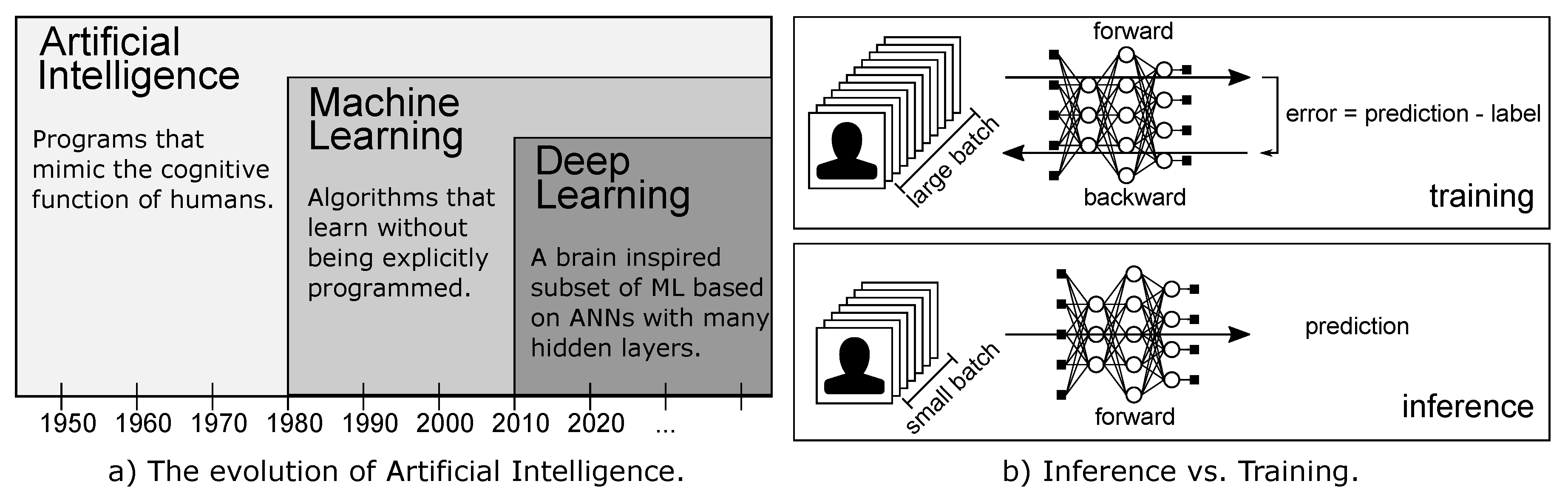

Future Internet | Free Full-Text | An Updated Survey of Efficient Hardware Architectures for Accelerating Deep Convolutional Neural Networks

Hardware Acceleration of Deep Neural Network Models on FPGA (Part 1 of 2) | ignitarium.com

Understanding the Deployment of Deep Learning algorithms on Embedded Platforms